Whenever we browse the web, we look for advice the way we would ask a friend. What makes us smile is advice that is objective and honest. Take a tour of the Les Babioles de Zoé site, where trust and sensitivity are present throughout.

Les Babioles de Zoé in a few figures:

- Instagram followers: more than 260K

- Facebook followers: more than 20K

The Les Babioles de Zoé blog: what should I know?

A blog is a form of online communication open to everyone, through which individuals or groups share a wide range of information. The blog “Les Babioles de Zoé” fits this definition: it was designed to bring its readers a moment of calm through its articles.

Created several years ago, the blog is devoted to trends and personal experiences in fashion, travel, and interior decoration. That energy comes through in its easy-to-read articles and its beautiful photos, all delivered in a friendly, relaxed tone that makes the site a pleasure to explore.

How does the Les Babioles de Zoé blog serve its readers?

Zoé runs her blog to share tips and advice with women who need them. The content is organized into several categories, including:

- Sélection Mode

- Fashion

- Déco

- Beauté

- Voyages

Each category gathers the blogger's own experiences along with advice for handling particular situations. The decoration category, for example, offers many tips so that you can personalize your living space quickly and easily. This women's blog also offers advice on planning a great holiday, creating your own style, and more.

Follow the latest trends with this blog

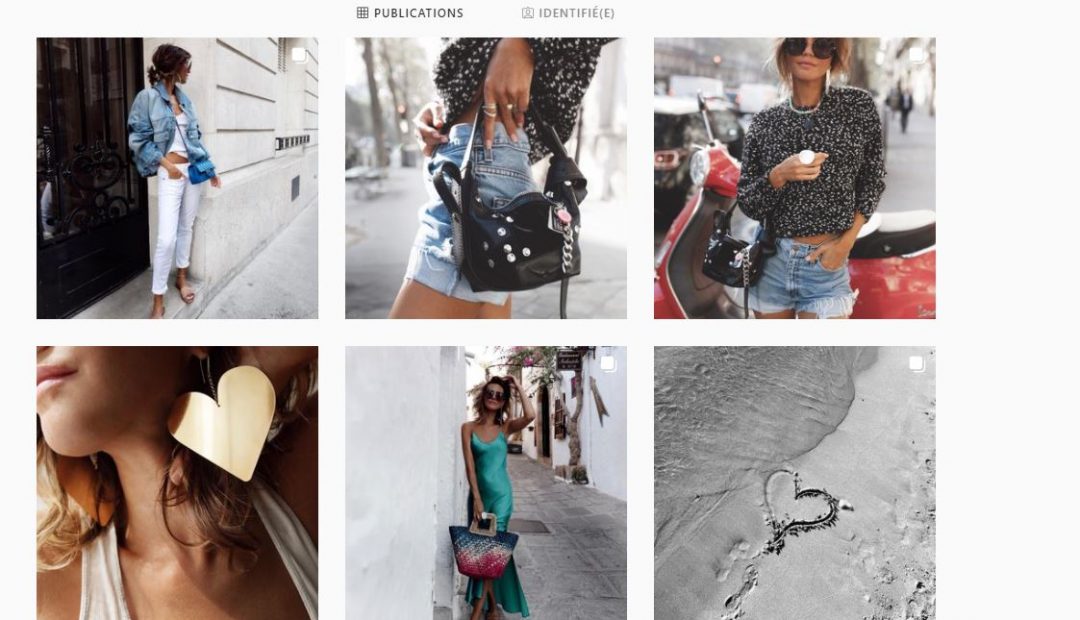

Fashionistas will appreciate the range of styles Zoé offers and will feel one step ahead when they dress in her looks. These are everyday looks that she wears to go out, to go shopping, to a dinner for two, and so on. Seeing a variety of outfits for different outings is very inspiring. The main reason to follow the blog closely is to keep up with her new looks, including seasonal ones. Zoé brings a fresh perspective to fashion by combining different accessories.

The “Sélection mode” category lets me easily find Zoé's favorite accessories for various occasions, and links are provided for convenience. Zoé's affordable fashion picks also make it possible to stay stylish on a budget. I have noticed that my own looks have changed radically since I started reading the blog: the outfits are easy to copy, especially since each article is backed by several photos. I have also learned to combine my accessories better to get a satisfying result. Beyond fashion, Zoé offers decoration ideas for every occasion, along with the essentials for various celebrations.

The decorating tips she gives her readers reflect her own choices, so she takes it on herself to work out how to carry them out. Decoration is not my strong point, so this makes things much easier for me. I found her suggestions very useful for Christmas decorating: I was short on ideas, and one of her proposals impressed all my guests. As for her rock-inspired outfits, I do not see them on other, more conventional bloggers. The fashion blogs we read today show almost all the same things, and readers appreciate Zoé's unique writing style.

Les Babioles de Zoé: my opinion

This little oasis of gentleness is very pleasant to browse: you find inspiration there and enjoy the stroll. Besides being young and dynamic, the blog is very honest and covers subjects that appeal to many young people. Her Instagram account seems an ideal place to find fashion-forward look ideas, thanks to its soft, soothing feed. Her posts remain very interesting even though she has more than 260,000 followers. Despite that audience, Zoé never loses her natural, simple style.

Other brands to follow:

- Une souris dans mon dressing: the shopping blog par excellence

- Bougies de Charroux: handcrafted scented candles!

- Gambette box: for quality tights